Most AI teams discover the same thing after deployment: the model works well in most cases. The problems are in the edge cases, the exceptions, the decisions the model was not trained well enough to handle confidently.

Automating everything sounds efficient until a wrong decision reaches an end user. At that point the cost is not just one error. It is trust.

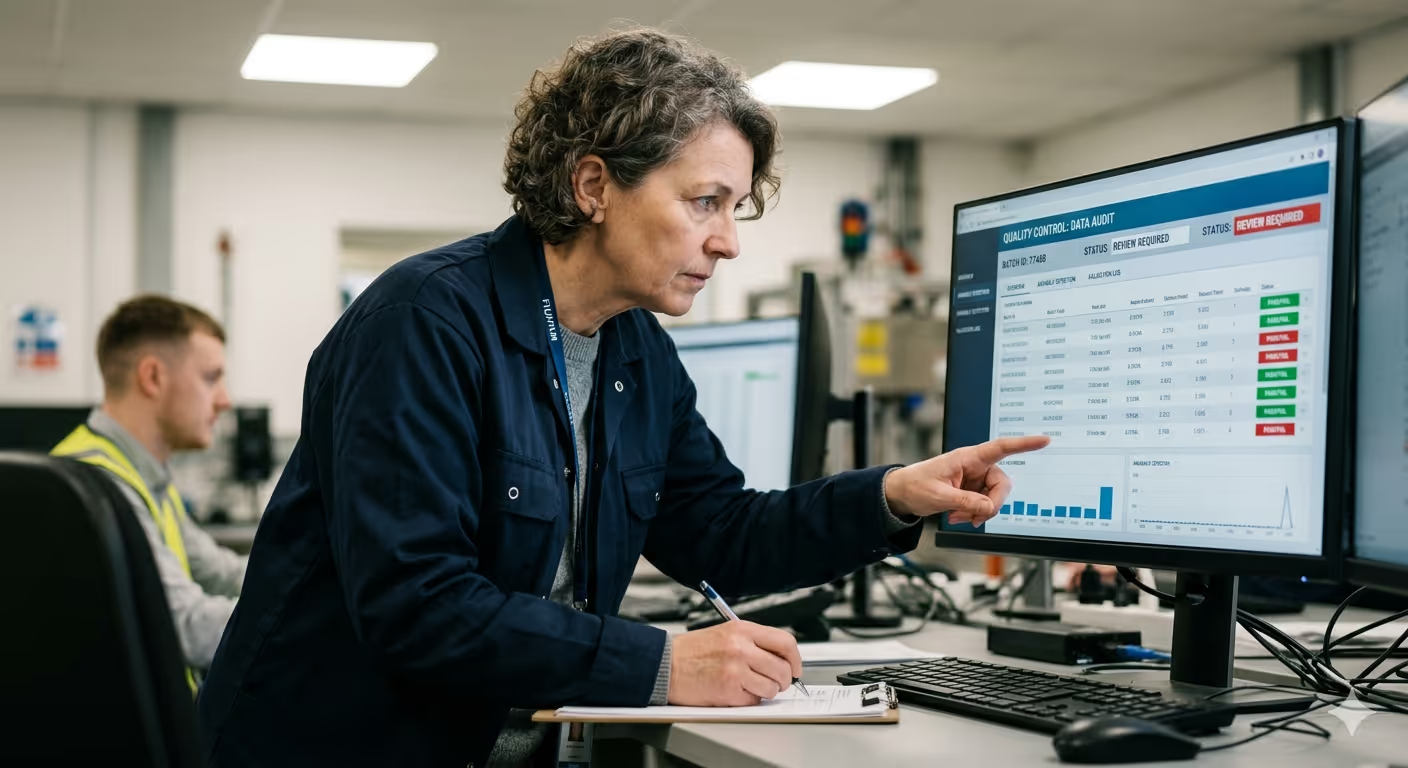

We provide the human layer between your model output and your end user. Our reviewers catch what automated systems miss, flag edge cases, and maintain the audit trail that makes AI deployments defensible.

Dedicated teams. ISO 27001 certified. Operational within 72 hours of scope agreement.